The Beginning

I have always loved building computers, putting Linux on them and making them do a variety of things. For me, it’s the perfect excuse to get some hardware (we all need hobbies) and maybe learn some stuff along the way. I think the mindset is more “can I run it?” than “should I?”. But that’s not necessarily a bad thing.

In the last couple of years I have obtained some “mini-PCs” (Ryzen 4650GE, 64GB RAM), which have served me well as hypervisors running Proxmox, with plenty of horsepower, though under-utilized. You know, running the typical stuff (Pi-hole, maybe a Samba server…), all fine and dandy so far.

Then I realized IPv6 is here and I knew absolutely nothing about it apart from the basic stuff CCNA teaches you.

I started building the lab a bit more seriously to get some IPv6 knowledge: I got a VM in the cloud and a routed /56 from a VPS provider, and that was enough, at least for some time, to learn the basics. Then I decided I wanted to build a network that’s actually present in the DFZ, participating in IXPs and peering with others… Now here I am :D. Thanks to Freetransit for the PA IPv6 space and the ASN sponsoring.

Writing this introduction is somewhat boring for me; I prefer the meaty details.

The Network at AS203528

Software

I have found VyOS to be a very nice little router. Coming from the SP world, the syntax is really simple for me (VyOS syntax is like the offspring of IOS and JunOS). It can do a bit of everything, with very good performance. Don’t expect things to be flawless, though: weird bugs will occur if you are running nightly builds. No showstoppers so far.

If I need more advanced firewalling, I just add a sprinkle of OPNsense VMs as needed.

Hardware

As for the hypervisors, the OSR1 site has 3x (Ryzen 4650GE, 64GB RAM), with additional USB3 NICs or external HDD boxes as needed. OSR2 is a simple i3 8109U NUC with 64GB RAM and multiple NICs.

For GbE at home I stick with some basic managed switches, Layer 2 only. Ports facing the VM hosts are always 2xGbE in a static bundle.

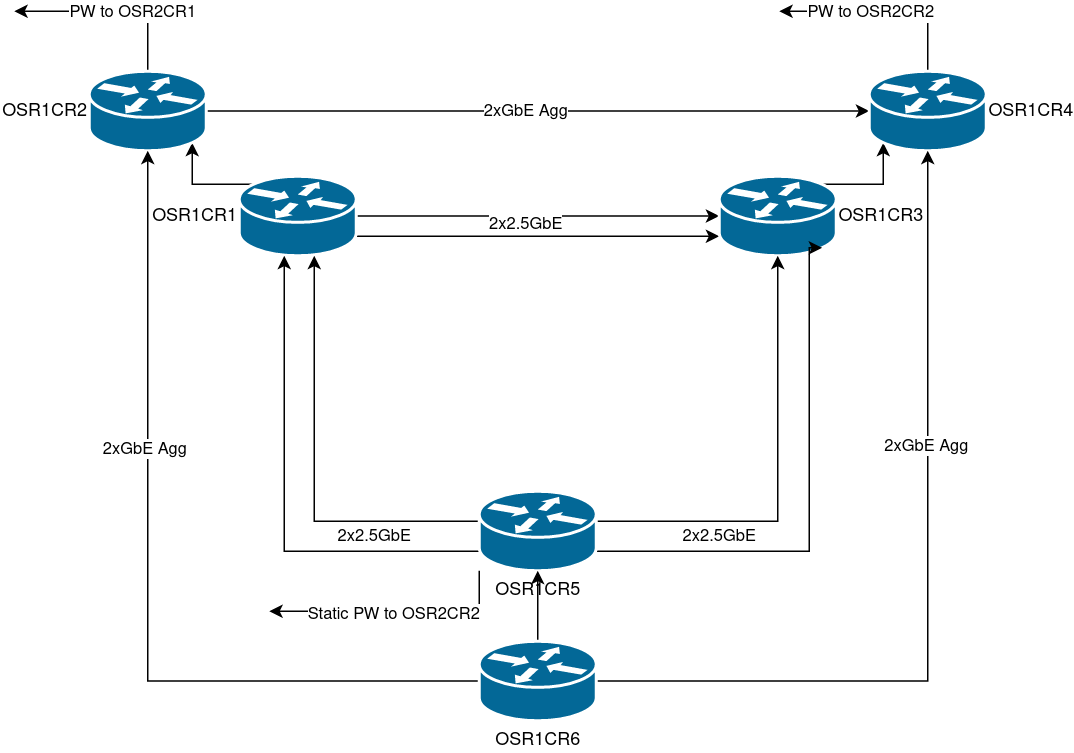

Now, GbE does not cut it anymore. But costs must be saved somehow. The Ryzen hosts each have 2x2.5GbE USB NICs (RTL 8156B), all connected to a simple unmanaged QSW-1108-8T switch. By creating multiple virtual interfaces, each on a different VLAN, I can just pretend there are many point-to-point links connecting my hosts over the switch (e.g. port 1 on host 1 has VLAN 10 to port 1 on host 2 and VLAN 11 to port 1 on host 3, port 2 on host 1 has VLAN 20 to port 2 on host 2, and so on…). This allows for very easy ECMP: I get around 4.5 Gbit/s between hosts in iperf tests.
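The VLAN numbering above can be sketched in a few lines of Python. This is only an illustration of the scheme, not something I actually run: the function name `vlan_plan`, the `base` of 10 and the numbering of the host 2 to host 3 links are assumptions that extend the examples given (VLAN 10, 11 and 20).

```python
from itertools import combinations


def vlan_plan(hosts, ports, base=10):
    """Map (port, host_a, host_b) -> VLAN ID, one VLAN per host pair per port.

    Assumed scheme: VLAN = port * base + index of the host pair, which
    reproduces VLAN 10, 11 and 20 from the text. With base=10 it only
    works for up to 10 host pairs (5 hosts) per port.
    """
    plan = {}
    for port in ports:
        for offset, (a, b) in enumerate(combinations(hosts, 2)):
            plan[(port, a, b)] = port * base + offset
    return plan


# Three hosts with two 2.5GbE ports each, as at OSR1.
plan = vlan_plan(hosts=[1, 2, 3], ports=[1, 2])
for (port, a, b), vlan in sorted(plan.items()):
    print(f"port {port}: host {a} <-> host {b} on VLAN {vlan}")
```

Every host pair ends up with two parallel links, one per physical port, which is what gives the IGP two equal-cost paths between any two hosts.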

Routers

Each VM host has two “core routers”: the odd-numbered routers deal with the 2.5GbE virtual connections, the even-numbered ones with GbE. All routers within each VM host are uplinked virtually to both core routers on the host. IS-IS runs as the IGP, with a route reflector per VM host. Only links and loopbacks are in the IGP; everything else is carried in iBGP.

These routers are within my private network, behind a firewall.

Each VM host has a dedicated “access router” providing the gateway for the respective VM host LAN segment. Each LAN segment is backed up by another access router, using HSRP (through the GbE managed switches). The idea is to keep intra-host comms on the host itself and inter-host comms over the 2x2.5GbE network, keeping GbE as backup for HSRP and in case the USB NICs or the unmanaged switch die. Each router does separate and simple stuff, which allows for almost seamless upgrades and maintenance.

Layout of inner network at OSR1

The connectivity between OSR1 and OSR2 is over GRETAP “pseudowires” running over AS203528 - this way I avoid creating even more encrypted tunnels if I already have AS203528 present.

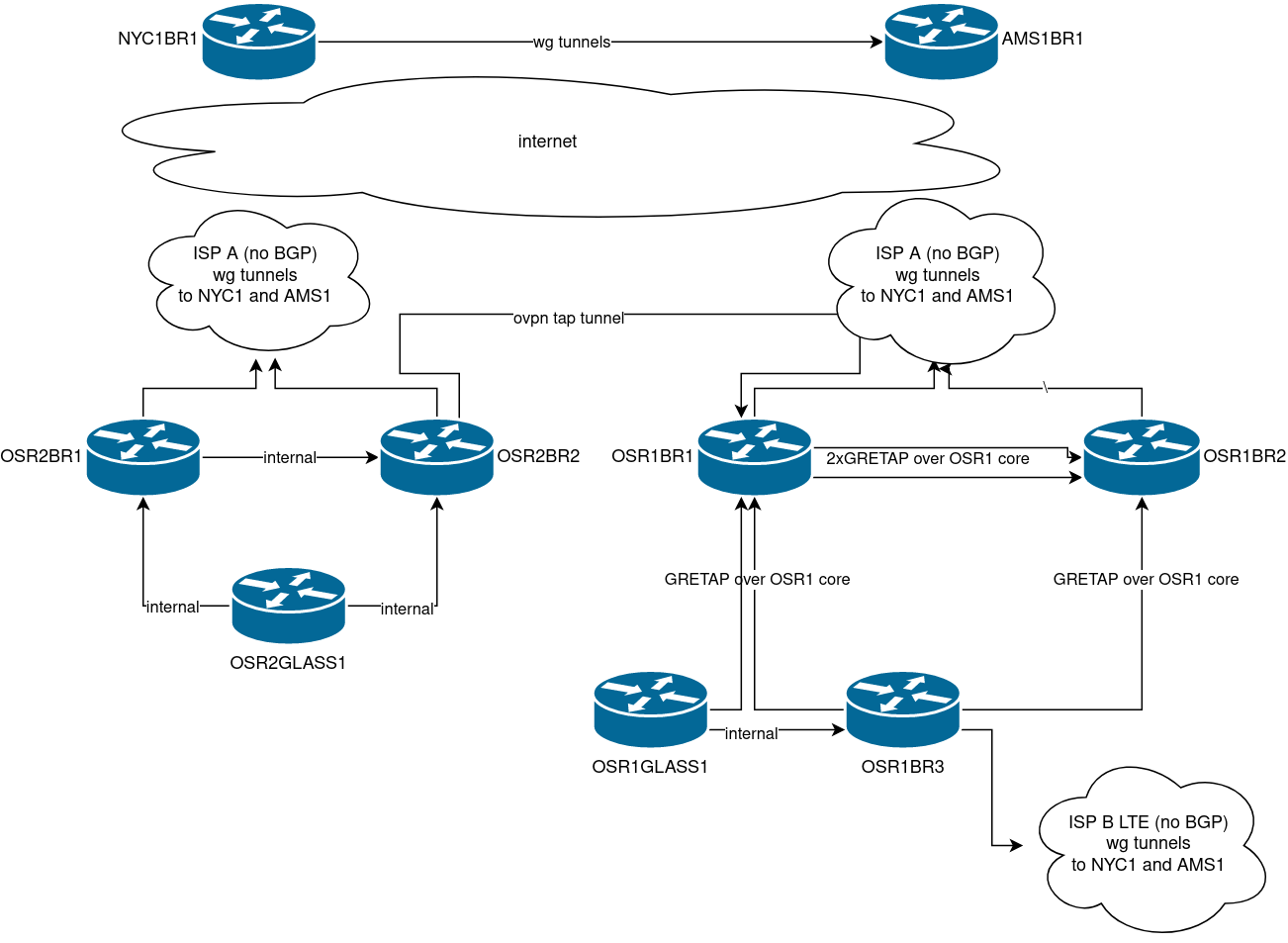

AS203528 Network Design

The network is built with several “Border Routers”, both at the OSR1 / OSR2 locations and at NYC1 / AMS1 (the latter is actually now at Dusseldorf…). These routers hold full IPv6 tables, and their internal IPv4 network is tightly firewalled. They are connected over the internet using either WireGuard tunnels or OpenVPN TAP (in case the VPN needs to be terminated on a router that does not hold full v6 tables, it can then be bridged to a BR). Within OSR1, however, the links between the public border routers are logical and built over the OSR1 private core using GRETAP.

OSPF (with BFD) is used as the routing protocol for v4, as it runs fine over WireGuard. The routers are fully iBGP meshed.

Tunnels over the internet are not really stable. If you hold full tables and one of your IGP adjacencies for IPv6 starts flapping (very common, it’s the public internet), CPU usage will spike as the router struggles with all the next hops changing. To solve this, I am running a full mesh of GRETAP (v4) tunnels on top of my WireGuard/OpenVPN tunnels. IS-IS runs on top of these GRETAP tunnels, and all IPv6 traffic traverses them instead. If the OSPF adjacency goes down on a WireGuard tunnel, the v4 traffic carrying the GRETAP tunnel is quickly re-routed, the IS-IS adjacency on top of it does not notice, and the quality of service is a million times better.

The full mesh of tunnels was generated using this script
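For illustration only, here is a minimal Python sketch of how such a mesh could be generated. It is not the script mentioned above. The router names are the border routers listed in this post, but the IPv4 endpoint addresses are made-up documentation addresses, and the emitted `set interfaces tunnel …` lines are modeled on VyOS 1.3/1.4-style syntax, which is an assumption to check against the version you run.

```python
from itertools import combinations

# Border routers from this post; the addresses are hypothetical
# (RFC 5737 documentation range), standing in for each router's v4 endpoint.
ROUTERS = {
    "OSR1BR1": "192.0.2.1",
    "OSR1BR2": "192.0.2.2",
    "OSR2BR1": "192.0.2.3",
    "AMS1BR1": "192.0.2.4",
    "NYC1BR1": "192.0.2.5",
}


def full_mesh(routers):
    """Return {router: [config lines]} with one GRETAP tunnel per router pair."""
    configs = {name: [] for name in routers}
    next_index = {name: 0 for name in routers}
    for a, b in combinations(sorted(routers), 2):
        # Each pair needs one tunnel interface on both ends.
        for local, remote in ((a, b), (b, a)):
            ifname = f"tun{next_index[local]}"
            next_index[local] += 1
            configs[local] += [
                f"set interfaces tunnel {ifname} description 'to {remote}'",
                f"set interfaces tunnel {ifname} encapsulation gretap",
                f"set interfaces tunnel {ifname} source-address {routers[local]}",
                f"set interfaces tunnel {ifname} remote {routers[remote]}",
            ]
    return configs


configs = full_mesh(ROUTERS)
print("\n".join(configs["OSR1BR1"]))
```

With n routers this produces n(n-1)/2 tunnels, so five border routers mean ten tunnels and four tunnel interfaces per router. That is exactly the kind of repetitive config worth generating rather than typing.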

Transits

- OSR1BR1: Freetransit (AS41051) – this is shut down on my end, as they have had an intermittent fault for several weeks now.

- OSR1BR2: Tunnelbroker.ch (AS58057)

- AMS1BR1: Servperso (AS34872)

- NYC1BR1: FranTech (AS53667)

Peerings

- OSR1BR2:

  - 4IXP

  - AS212925 over Tunnel

- OSR2BR1:

  - AS59645 over Tunnel

- AMS1BR1:

  - LocIX Dusseldorf IXP

Network Logo

As you can see, graphic design is my passion, so I went for a WordArt-inspired Beef Cafe.

Summary

I have covered a bit of how everything is going so far. The idea of this blog is to document whatever interesting stuff I do in the future. This intro is just something I wanted to get out of the way ASAP.

For any feedback, just email me at either address listed on PeeringDB :)